You are comparing Microsoft 365 Copilot and Microsoft 365 Copilot Chat.

What is available in both versions?

Correct Answer:

B

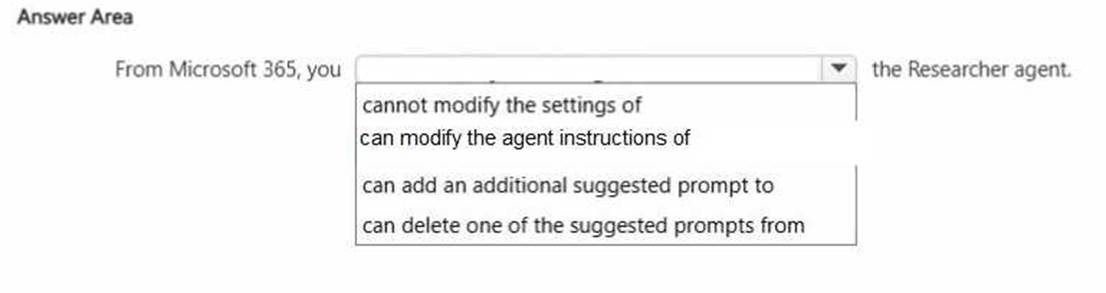

Select the answer that correctly completes the sentence.

Solution:

The correct completion is "cannot modify the settings of" because built-in agents such as the Researcher agent in Microsoft 365 Copilot are system-defined experiences. These agents are designed and managed by Microsoft to ensure consistent functionality, security, compliance, and alignment with responsible AI standards.

While users can interact with Researcher, provide prompts, and refine outputs during conversations, they cannot directly modify the core settings or configuration of this built-in agent. Deeper configuration and customization are available when you create your own custom Copilot agents with approved development tools, but the default agents provided by Microsoft have fixed, centrally controlled configurations.

Options such as modifying agent instructions, adding prompts, or deleting suggested prompts imply direct structural customization of the built-in agent interface, which is not supported in the standard Microsoft 365 Copilot experience.

This reflects a key generative AI governance principle in enterprise environments: system-level controls remain centrally managed to maintain data protection, compliance, and responsible AI implementation across the organization.

Does this meet the goal?

Correct Answer:

A

You are discussing Microsoft 365 Copilot with a colleague. The colleague asks which data Copilot uses to answer questions when using the Work scope.

What should you tell your colleague?

A. Copilot provides responses based only on the general knowledge that Copilot was trained on.

B. Copilot provides responses based on all the data in your organization's Microsoft 365 environment and the general knowledge that Copilot was trained on.

C. Copilot provides responses based only on data that the user can access and the general knowledge that Copilot was trained on.

D. Copilot provides responses based only on data that the user can access.

Correct Answer:

C

Microsoft 365 Copilot draws on two primary knowledge sources: the training data of its foundational large language model and the organizational data available within the Microsoft 365 tenant. When it uses organizational data, Copilot enforces Microsoft's security and compliance model, meaning it only retrieves and uses data that the signed-in user is authorized to access.

When using the Work scope, Copilot combines the general knowledge it was trained on with organizational data such as documents, emails, chats, calendars, and files stored in Microsoft 365. Importantly, Copilot respects role-based access control and existing permissions. It does not surface information from content the user does not have access to.

Option A is incomplete because Work scope includes organizational data. Option B is incorrect because Copilot does not access all tenant data indiscriminately; it is permission-scoped. Option D is incomplete because Copilot also leverages its general training knowledge.

Therefore, the correct explanation is that Copilot provides responses based only on data the user can access, combined with its general training knowledge.

You sign in to the Microsoft 365 Copilot app by using your work account as shown in the exhibit.

A colleague tells you that when they open the Microsoft 365 Copilot app, they have access to the Researcher agent. You need to access the Researcher agent.

What should you do?

Correct Answer:

B

You receive the following response to a prompt: "Sorry, it looks like I can't respond to this. Let's try a different topic."

What is a possible cause of the response?

Correct Answer:

B

Microsoft 365 Copilot follows Microsoft's Responsible AI principles and enforces strict content safety policies. When a prompt violates safety guidelines (for example, because it contains harmful, abusive, illegal, or restricted content), the system may refuse to generate a response. The refusal message shown is consistent with safety filtering behavior.

Generative AI systems such as Copilot include moderation layers that evaluate prompts before output is generated. If a prompt is classified as unsafe or as non-compliant with policy, Copilot blocks the request and encourages the user to try a different topic.

A vague prompt typically results in a generic or clarifying response rather than a refusal. There is no fixed limit of five requests per prompt. Exceeding the context window usually results in truncation or processing errors, not a safety-based refusal message.

Therefore, the most likely cause of the response is that the prompt contains language that violates safety guidelines.